The $18 Million Hidden Cost of Not Modernizing Your Enterprise Systems

Updated on: May 15, 2026

Everyone's racing to build an AI strategy. Speed creates the illusion of progress, but it doesn't guarantee advantage. The real cost shows up later, in what your infrastructure can't actually support.

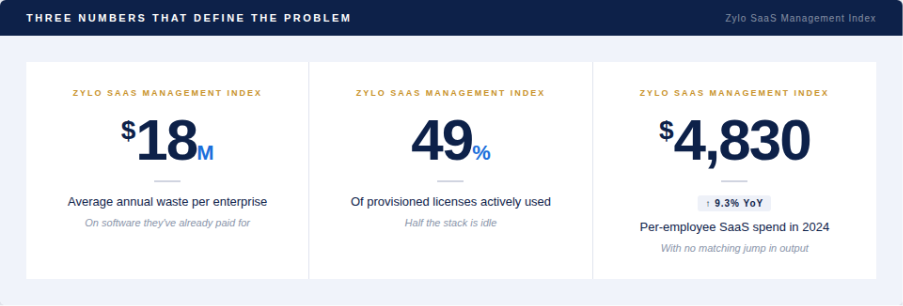

Read these three numbers together.

That is neither a vendor problem nor a procurement problem. That is what an organization looks like when it has been buying technology faster than it has been governing it, and the bill has finally gotten too large to ignore.

Here is what makes it worse: most leadership teams are having entirely the wrong conversation about it. The strategy decks are full of model comparisons, vendor evaluations, build-versus-buy frameworks. All of it downstream of the actual problem. None of it touching the foundational layer where the real damage is sitting.

The organizations that will extract durable value from AI are not the ones with the largest budgets or the most sophisticated models. They are the ones that had the discipline and, honestly, the courage to look at what they already own, cut what they don't need, and put a solid foundation in place before they started building on top of it.

Everyone else is just adding complexity to complexity and calling it a strategy.

01 - The instinct that's costing you

When budgets tighten, the instinct makes sense. Find a better tool. Bring in a new platform. Add the capability that's missing. So you add an app, then another, and another, and each one solves something. Until it doesn't.

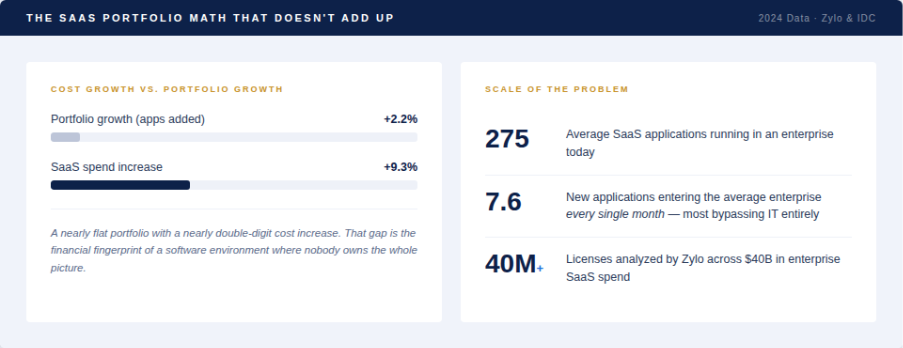

Then you look at the stack and the math gets uncomfortable.

A nearly flat portfolio with a nearly double-digit cost increase. That gap is not a coincidence but the financial fingerprint of a software environment where nobody is actually accountable for the whole picture.

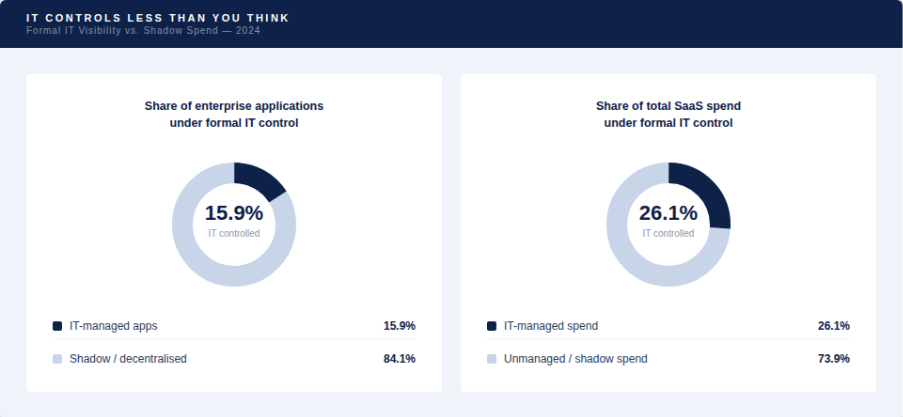

Zylo's analysis of 40 million licenses and $40 billion in spend found that 7.6 new applications enter the average enterprise environment every single month - most of them expensed directly by employees, bypassing IT entirely. The spend is real. The oversight isn't.
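Annualized, that arrival rate is worth spelling out. This is a simple worked multiplication on the Zylo figure and makes no assumption about how many tools are retired over the same period:

```latex
7.6\ \tfrac{\text{apps}}{\text{month}} \times 12\ \tfrac{\text{months}}{\text{year}} = 91.2\ \tfrac{\text{apps}}{\text{year}}
```

That works out to roughly 91 new applications a year entering the average environment.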

Getting SaaS visibility wrong isn’t just an efficiency concern. At scale, it turns into a governance liability, a security exposure, and a budget that nobody can actually defend - particularly when 30% of what you’re paying for, according to Gartner, is delivering zero value.

02 - The architecture is the problem

The default approach produces something familiar: each team picks the best tool for their job, procures it independently, and moves on. No integration layer. No architecture review. Every purchase made in isolation from the decisions happening twenty meters away.

In the early days this works fine. At scale, the cost problem stops being abstract.

Take a scenario that plays out in thousands of enterprises right now. Marketing runs one platform for email campaigns. Sales runs a separate tool for outbound sequences. Customer success uses a third for lifecycle messaging. Three licenses. Three vendor contracts. Three renewal cycles. And all three teams are communicating with the same customer - without any of them knowing what the others said last week.
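One way to surface this kind of overlap is to tag every application in the inventory with the capability it provides and then group on that tag. The Python below is a minimal sketch under stated assumptions: the `App` record, its fields, and the tool names and costs are hypothetical placeholders invented for illustration. None of them are figures from the research cited in this article.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class App:
    """One line of a hypothetical application inventory."""
    name: str
    team: str
    capability: str     # what the tool is used for, e.g. "customer messaging"
    annual_cost: float  # illustrative figures only


def find_overlaps(inventory: list[App]) -> dict[str, list[App]]:
    """Group apps by capability and keep only capabilities covered by 2+ tools."""
    by_capability: dict[str, list[App]] = defaultdict(list)
    for app in inventory:
        by_capability[app.capability].append(app)
    return {cap: apps for cap, apps in by_capability.items() if len(apps) > 1}


if __name__ == "__main__":
    # Invented inventory mirroring the marketing / sales / customer success example.
    inventory = [
        App("EmailTool", "Marketing", "customer messaging", 40_000),
        App("SequenceTool", "Sales", "customer messaging", 35_000),
        App("LifecycleTool", "Customer Success", "customer messaging", 30_000),
        App("Ledger", "Finance", "accounting", 50_000),
    ]
    for capability, apps in find_overlaps(inventory).items():
        total = sum(a.annual_cost for a in apps)
        teams = ", ".join(a.team for a in apps)
        # prints: customer messaging: 3 tools (Marketing, Sales, Customer Success), $105,000/year
        print(f"{capability}: {len(apps)} tools ({teams}), ${total:,.0f}/year")
```

The grouping is only as good as the capability tags, so agreeing on a shared vocabulary for them is where most of the real effort goes.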

Key Insight:

McKinsey documented the extreme version of this: one financial services firm was running 7 different payment systems and 20 custom applications doing identical work. Engineers in these environments spend up to 30% of their development time just making redundant systems talk to each other. You're not just paying for duplicate tools. You're paying your most expensive people to maintain the plumbing between them.

The pilot looks fine. Production is where things break, and by then the architecture is already too embedded to change easily.

How Much of Enterprise Software Spend Is Actually Wasted?

Gartner estimates 30% of enterprise software spend delivers zero measurable value. Zylo’s analysis of 40 million licenses and $40B in SaaS spend shows 7.6 new applications enter the average enterprise each month, often bypassing IT, creating a software portfolio where costs rise but usable value stays flat.

The real issue is software sprawl overhead - engineers can spend up to 30% of their time integrating redundant systems, compliance risk grows from untracked tools, and AI strategy execution breaks down on fragmented, untrusted data infrastructure.

03 - What the actual cost looks like

Think of it like carrying debt you haven't fully accounted for. The licenses are the principal. The integration overhead, the engineering drag, the audit exposure - that's the interest. And it compounds.

60% of CIOs say their tech debt increased over the last three years. Not stabilized. Increased.

Some organizations paid more than $1 million in software audit fines over three years, largely because nobody had accurate visibility into what was actually deployed. That's not bad luck. It's the operational consequence of letting procurement outpace governance.

"Enabling employee-led purchasing of software without oversight has become unsustainable, resulting in unmanageable budgets and significant risks."

- Eric Christopher, CEO, Zylo

The former CIO of a major cloud provider described what happened when that organization finally tackled the structural problem directly: they went from 75% of engineer time consumed by maintenance overhead down to 25%. That was not from hiring more engineers. It was from retiring the debt systematically. Half of the most expensive human capital in the building, freed up by an architectural decision.
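Treating total engineering time as 1, the arithmetic of that reported shift works out as follows. This is only a worked check on the two percentages quoted:

```latex
\begin{aligned}
\text{capacity moved off maintenance} &= 0.75 - 0.25 = 0.50 \\
\text{relative cut in maintenance} &= \frac{0.75 - 0.25}{0.75} \approx 66.7\% \\
\text{time available for new work} &: 0.25 \rightarrow 0.75 \quad (3\times)
\end{aligned}
```

In other words, half of all engineering time moved from maintenance to new work, and the share available for new work tripled.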

04 - Why most organizations haven't fixed this yet

There are two reasons and neither has anything to do with capability.

First: the early pain isn't visible. When you're running thirty or fifty applications, the waste feels manageable. It only becomes undeniable at two hundred and seventy-five, when the budget impact is obvious and the stack is already too embedded to rationalize quickly.

Second: proper rationalization is genuinely hard. Pointing a team at a new tool is simple. Auditing everything you own, retiring redundant systems, consolidating overlapping capabilities, and rebuilding governance around what remains - that is a serious organizational problem. The kind that takes real leadership commitment to push through, because somebody somewhere will always claim they need the tool you're trying to cut.

The organizations that haven't fixed this yet aren't incompetent. They're just dealing with a problem that was never visible enough to demand urgency - until AI made the infrastructure underneath it impossible to ignore.

What Should Companies Fix Before Investing Heavily in AI?

Three things, in order.

First, the data layer: if teams spend 60–70% of their time locating and cleaning data, the prerequisites for AI adoption aren’t met - models inherit the fragmentation and produce outputs nobody trusts.

Second, the application stack: rationalize before adding; removing redundant tools frees engineering time for AI initiatives and restores governance visibility.

Third, ownership: every AI initiative needs a named owner accountable beyond launch, not a sponsor who exits after the pilot.

Ignoring these is why adding AI tools can reduce business performance instead of improving it. The infrastructure has to come first, because everything built on top of it either compounds it or fights it.

05 - The AI reality check

The AI spending surge is real, but what's underneath it is mostly unexamined.

Here's what almost nobody is saying clearly enough: you cannot build a coherent AI strategy on top of an incoherent data infrastructure. You're proposing to feed models data that took 60 to 70% of someone's working hours to locate and sanitize, then wondering why the outputs require so much review before anyone trusts them.

A European bank that rationalized its data infrastructure cut reporting volume by 80% and costs by 60%. More importantly, it created the conditions under which AI tooling could actually work against clean, trustworthy data rather than fighting through years of accumulated mess.

Bessemer's research suggests AI-native companies will reach $1 billion in ARR 50% faster than their cloud predecessors. The cost of getting the system wrong will scale right alongside the opportunity - not independently of it.

06 - What you should actually be tracking

Most technology business cases get approved on capability benchmarks - which model performs best, which platform has the most features. The real cost - integration overhead, idle licenses, engineering drag, audit exposure, data governance debt - rarely makes it into the same deck. So the ROI gap isn't surprising. The full cost was never accounted for in the first place.

The number that matters is not how many applications your organization owns. It's what percentage of them maps to a traceable business outcome right now. If that number isn't clearly above 50%, you have a structural problem.
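The metric itself is simple to compute once each application record carries a business outcome its owner has agreed to. The Python below is a hypothetical sketch: the `InventoryItem` record, its `business_outcome` field, and the `margin` parameter (a stand-in for how far above 50% counts as "clearly" above) are assumptions for illustration, not part of any specific tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InventoryItem:
    """Hypothetical inventory record; field names are illustrative assumptions."""
    name: str
    # The business outcome this app is traceably tied to, or None if nobody can name one.
    business_outcome: Optional[str]


def outcome_coverage(inventory: list[InventoryItem]) -> float:
    """Return the fraction of applications mapped to a traceable business outcome."""
    if not inventory:
        return 0.0
    # Empty strings and None both count as "not mapped".
    mapped = sum(1 for item in inventory if item.business_outcome)
    return mapped / len(inventory)


def has_structural_problem(
    inventory: list[InventoryItem], threshold: float = 0.5, margin: float = 0.0
) -> bool:
    """Return True unless coverage is strictly above threshold + margin.

    The 50% threshold comes from the article; `margin` is a hypothetical knob
    for how far above 50% counts as "clearly" above.
    """
    return outcome_coverage(inventory) <= threshold + margin


if __name__ == "__main__":
    # Invented portfolio: two mapped apps, two that nobody can tie to an outcome.
    portfolio = [
        InventoryItem("CRM", "pipeline visibility"),
        InventoryItem("Billing", "revenue collection"),
        InventoryItem("LegacyWiki", None),
        InventoryItem("ShadowSurveyTool", None),
    ]
    print(f"{outcome_coverage(portfolio):.0%} mapped")  # prints: 50% mapped
    print(has_structural_problem(portfolio))            # prints: True
```

Counting apps rather than spend keeps the sketch close to the article's wording; weighting each app by its annual cost would be an equally reasonable variant.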

Conclusion: Three verdicts, one principle

1 - Sprawl is not scale.

Running 275 applications with 49% utilization and no central visibility is not a mature technology environment. It is accumulated procurement masquerading as capability. Better tools delay the cost problem. They don't solve it.

2 - The data layer is the AI strategy.

There is no shortcut here. Organizations that invest in stack rationalization, integration discipline, and data governance now are building the infrastructure that makes AI investment return something real. Everyone else is adding more tools to a foundation that cannot support the weight.

3 - The advantage is structural, not technological.

The organizations building durable advantages in AI are not running the most powerful models or the largest portfolios. They are the ones who figured out that clean data, rationalized infrastructure, and governed spend are the actual prerequisites - and built the systems to make that happen before the AI spending wave hit.

"Organizations of all sizes must embrace SaaS Management by investing in team resources, tools, and processes to not only control expenses but also capitalize on opportunities for innovation. SaaS Management becomes mission critical as AI permeates the software landscape."

- Scott Dorsey, High Alpha